The Philosophy of Active Measurement

Synthetic Monitoring Series — Part 6

Passive monitoring waits for your infrastructure to report a problem. Active measurement asks the infrastructure whether it has one — before anyone else does.

That distinction sounds subtle. In practice, it changes everything about how quickly problems get caught, how completely they get diagnosed, and how much confidence your team has in the data they’re acting on. This post covers the principles, the tradeoffs, and the operational mechanics of building a monitoring strategy around active measurement.

Start Here: What the Videos Cover

▶ Video 13 — “The Philosophy of Active Measurement”

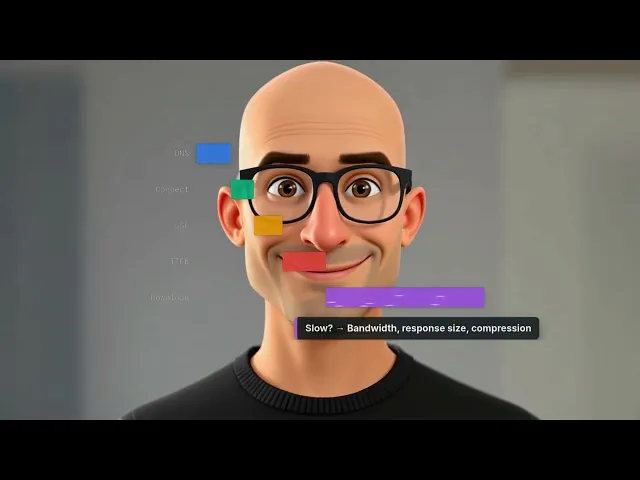

▶ Video 14 — “Anatomy of a Check — The Five Phases of an HTTPS Request”

▶ Video 15 — “Check Intervals — How Often Is Often Enough?”

▶ Video 16 — “Distributed Probes — Why Location Matters”

The 3 AM Scenario

3:00 AM

No users are online. No traffic is flowing. Your passive monitoring sees nothing — because there’s nothing to see.

A synthetic probe sends its scheduled check. The API returns a 500 error. An alert fires.

By 3:05 AM, your on-call engineer is investigating — hours before the first customer would have noticed.

This is the core argument for active measurement. Passive monitoring is dependent on traffic. No traffic means no signal, which means no detection. Active measurement is independent of traffic — it generates its own signal, on a schedule, whether or not anyone is using the service.
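At its core, a synthetic check is a request the probe sends on its own schedule, plus a rule for judging the response. The sketch below uses only Python's standard library. The function names (`run_check`, `probe_loop`), the "2xx/3xx is healthy" rule, and the 10-second timeout are illustrative assumptions, not any particular product's behavior. `urllib.request.urlopen` raises `HTTPError` for 4xx/5xx responses, so the sketch converts that exception back into a status code.

```python
import time
import urllib.error
import urllib.request
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class CheckResult:
    url: str
    status: Optional[int]   # HTTP status code, or None if no response arrived
    latency_s: float        # wall-clock duration of the attempt
    error: Optional[str] = None

    @property
    def healthy(self) -> bool:
        # Assumption: any 2xx/3xx counts as healthy; 4xx/5xx or no response is a failure.
        return self.status is not None and 200 <= self.status < 400


def http_fetch(url: str, timeout_s: float) -> int:
    """Default fetcher: return the HTTP status code for a GET request."""
    try:
        with urllib.request.urlopen(url, timeout=timeout_s) as resp:
            return resp.status
    except urllib.error.HTTPError as exc:
        # urlopen raises on 4xx/5xx; the status code is still the signal we want.
        return exc.code


def run_check(url: str, fetch: Callable[[str, float], int] = http_fetch,
              timeout_s: float = 10.0) -> CheckResult:
    """Run one check. No user traffic is needed: the probe creates its own request."""
    start = time.monotonic()
    try:
        status = fetch(url, timeout_s)
        return CheckResult(url, status, time.monotonic() - start)
    except Exception as exc:  # timeouts, DNS failures, refused connections
        return CheckResult(url, None, time.monotonic() - start, error=repr(exc))


def probe_loop(url: str, interval_s: float, alert: Callable[[CheckResult], None],
               max_runs: Optional[int] = None,
               fetch: Callable[[str, float], int] = http_fetch) -> None:
    """Check on a fixed schedule, whether or not anyone is using the service."""
    runs = 0
    while max_runs is None or runs < max_runs:
        result = run_check(url, fetch)
        if not result.healthy:
            alert(result)
        runs += 1
        if max_runs is None or runs < max_runs:
            time.sleep(interval_s)
```

For example, `probe_loop("https://api.example.com/health", 60, print)` (a hypothetical URL) would check once a minute and print every failing result. The injectable `fetch` parameter keeps the scheduling logic testable without a network.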

The Three Strengths of Active Measurement

Active monitoring has three structural advantages over passive approaches that hold true regardless of what you’re testing or how complex your infrastructure is.

Strength | What It Means in Practice |

|---|---|

Continuous | Runs on a schedule regardless of user traffic. The 3 AM outage gets caught even when no one is online. |

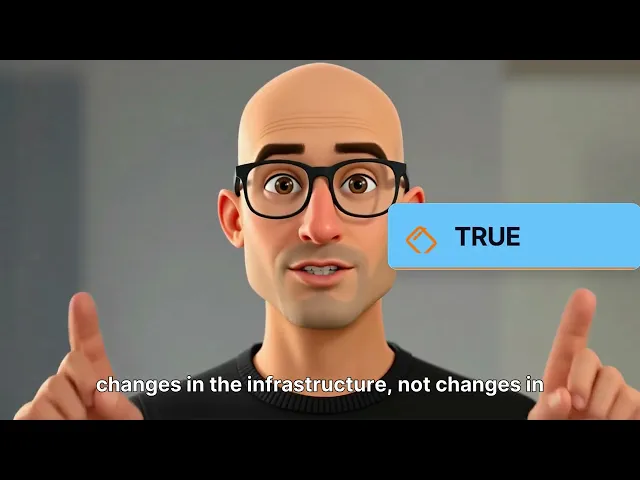

Controlled | Tests from known, fixed locations give you repeatable baselines. Changes in results mean changes in infrastructure, not changes in user behavior. |

Consistent | The test itself doesn’t vary. You can compare results across days, weeks, and months with confidence. |
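Because the probe location and the test stay fixed, each probe's own history is a usable baseline. The sketch below flags a latency sample that sits far above that history. The median/MAD statistic, the multiplier `k=5`, and the 1 ms floor are illustrative assumptions you would tune, not values from this series.

```python
from statistics import median


def latency_baseline(history_ms: list[float]) -> tuple[float, float]:
    """Return (median, median absolute deviation) for one probe/target pair."""
    med = median(history_ms)
    mad = median(abs(x - med) for x in history_ms)
    return med, mad


def is_anomalous(latest_ms: float, history_ms: list[float],
                 k: float = 5.0, floor_ms: float = 1.0) -> bool:
    """Flag a result far above this probe's own history.

    With a fixed location and a fixed test, a shift here points at the
    infrastructure rather than at a change in who is using it.
    floor_ms keeps a perfectly flat history (MAD == 0) from flagging tiny jitter.
    """
    med, mad = latency_baseline(history_ms)
    return latest_ms > med + k * max(mad, floor_ms)
```

Median and MAD are used here instead of mean and standard deviation because a single past outage in the history would otherwise inflate the baseline.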

The tradeoff is coverage: you only measure what you configure. A problem on an endpoint you’re not monitoring will not be detected. This is not a flaw to engineer around — it’s a constraint to design for, by being deliberate about what you choose to monitor and why.
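Being deliberate about coverage can start with something as simple as diffing your endpoint inventory against the checks you actually run. A minimal sketch, assuming you can export both as lists of URLs or paths; the `Check` type and `coverage_gaps` helper are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    url: str
    interval_s: int


def coverage_gaps(inventory: set[str], checks: list[Check]) -> list[str]:
    """Return endpoints that exist in the inventory but have no synthetic check.

    Active measurement is blind to anything it is not told to test, so this list
    is exactly where an outage would go undetected by synthetic monitoring.
    """
    covered = {c.url for c in checks}
    return sorted(inventory - covered)
```

An empty result does not mean the service is fully observed; it only means every endpoint you listed has some check. Whether each check runs often enough is a separate question.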

Active, Passive, and Polling: Why You Need All Three

Active measurement works best alongside passive monitoring and device polling, not as a replacement for either. Each approach reveals something the others cannot.

| ACTIVE | PASSIVE | POLLING |
|---|---|---|
| Synthetic Checks | NetFlow / Traffic | SNMP / Device |
| Availability and performance from the user’s perspective. Catches outages before users report them. | Real traffic patterns, bandwidth utilization, and who’s talking to whom. Reveals actual usage, not simulated usage. | Device health: CPU, memory, interface status, hardware errors. The internal state of your equipment. |

Together, these three approaches provide complete visibility. Active monitoring tells you what users experience. Passive monitoring tells you what’s actually flowing. Device polling tells you what your equipment is doing internally. None of them alone tells the full story.

Check Intervals: The Math Behind “How Often?”

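Your check interval determines the shortest outage you can reliably detect. A useful rule of thumb borrows from the Nyquist sampling theorem: your check interval should be at most half the duration of the shortest outage you need to catch. At that rate, any outage of that length is guaranteed to contain at least two checks, so the failure is observed twice rather than once.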
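The half-interval rule rests on a short counting argument. Write T for the check interval, φ for the schedule's phase, and D for the outage length, with the outage occupying the half-open window [a, a + D). Any such window contains at least ⌊D/T⌋ checks, so T ≤ D/2 guarantees two. When T > D, detection becomes a matter of timing; the last two lines assume the outage start is uniformly random relative to the check schedule.

```latex
t_k = \varphi + kT, \qquad \text{outage window } [a,\; a + D)

\#\{\, k : t_k \in [a,\, a + D) \,\} \;\ge\; \left\lfloor \frac{D}{T} \right\rfloor

T \le \frac{D}{2} \;\Longrightarrow\; \left\lfloor \frac{D}{T} \right\rfloor \ge 2

T > D: \qquad P(\text{no check inside the outage}) = 1 - \frac{D}{T}

T = 300\,\text{s},\; D = 180\,\text{s}: \qquad 1 - \tfrac{180}{300} = 0.4
```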

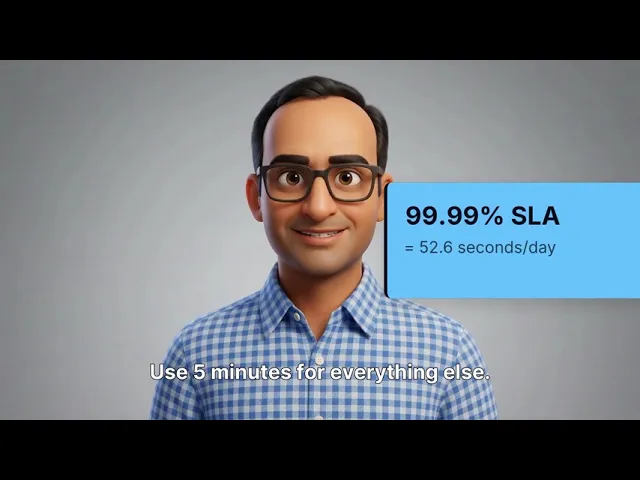

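If your SLA promises 99.99% uptime, you have roughly 52 minutes of allowed downtime per year: about 4.3 minutes per 30-day month, or under 9 seconds per day. A 5-minute check interval can miss a 3-minute outage entirely (about 40% of the time, the outage falls between two checks), even though that one outage consumes most of a month’s budget. The math is unforgiving.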
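Downtime budgets are easy to misstate when converting between periods, and the chance of missing an outage is just as quick to compute. A short sketch, assuming checks on a fixed interval and an outage start that is uniformly random relative to that schedule; the function names are illustrative.

```python
SECONDS_PER = {
    "day": 24 * 3600,
    "30-day month": 30 * 24 * 3600,
    "365-day year": 365 * 24 * 3600,
}


def downtime_budget_s(availability_pct: float, period_s: float) -> float:
    """Allowed downtime, in seconds, for an availability target over one period."""
    return period_s * (1 - availability_pct / 100)


def miss_probability(outage_s: float, interval_s: float) -> float:
    """Chance that an outage falls entirely between two consecutive checks.

    Assumes a fixed check interval and an outage start that is uniformly random
    relative to the schedule. An outage at least one interval long always
    contains a check, so its miss probability is zero.
    """
    if outage_s >= interval_s:
        return 0.0
    return 1 - outage_s / interval_s


for name, secs in SECONDS_PER.items():
    budget = downtime_budget_s(99.99, secs)
    print(f"99.99% per {name}: {budget:.1f} s ({budget / 60:.1f} min)")
print(f"3-min outage, 5-min checks: {miss_probability(180, 300):.0%} chance of going unseen")
```

For 99.99%, this works out to about 8.6 seconds per day, 4.3 minutes per 30-day month, and 52.6 minutes per 365-day year. A 3-minute outage under 5-minute checks has a 40% chance of landing entirely between two checks.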

| Interval | Tier | Typical Targets |
|---|---|---|
| 30 sec | Critical SLA Endpoints | APIs tied to financial SLAs, login flows, payment processing. Anything where 99.99% uptime has contractual teeth. |
| 60 sec | Important Production Services | Core application endpoints, customer-facing APIs. The default for most production monitoring. |
| 5 min | Standard Monitoring | Internal services, staging environments, non-critical endpoints. Appropriate where brief outages are tolerable. |
| 1 hr | Slow-Changing Signals | Certificate expiry tracking, DNS record validation. Things that change slowly but matter greatly when they do. |
| 24 hrs | Periodic Validation | Compliance checks, configuration audits. Verification that things are still as expected, not continuous monitoring. |
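The tiers can also be derived rather than picked by feel: given the shortest outage you must catch, choose the least frequent tier whose interval is still at most half of it. A sketch under that assumption; the `Tier` member names mirror the interval tiers, and `tier_for` is a hypothetical helper.

```python
from enum import Enum


class Tier(Enum):
    """Check-interval tiers, in seconds."""
    CRITICAL_SLA = 30
    IMPORTANT_PRODUCTION = 60
    STANDARD = 5 * 60
    SLOW_CHANGING = 60 * 60
    PERIODIC_VALIDATION = 24 * 60 * 60


def shortest_reliable_outage_s(tier: Tier) -> int:
    """Shortest outage guaranteed to span two checks at this tier (half-interval rule)."""
    return 2 * tier.value


def tier_for(shortest_outage_s: float) -> Tier:
    """Least frequent tier whose interval is at most half the shortest outage to catch."""
    fitting = [t for t in Tier if 2 * t.value <= shortest_outage_s]
    if not fitting:
        raise ValueError(f"no tier checks often enough to catch a {shortest_outage_s} s outage")
    return max(fitting, key=lambda t: t.value)
```

Here `tier_for(120)` returns `Tier.IMPORTANT_PRODUCTION` and `tier_for(600)` returns `Tier.STANDARD`. The hourly and daily tiers exist for slow-changing signals rather than outage detection, so in practice only outage-driven endpoints would go through this helper.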

Distributed Probes: Why a Single Location Is Always Wrong

Your single monitoring probe in US-East says everything is green. Meanwhile, all of your European customers can’t reach your service — a submarine cable cut, a CDN misconfiguration, or an ISP routing problem that only affects traffic from certain regions.

One probe, one perspective. And that perspective can be completely wrong for a significant portion of your users.

Three probe selection strategies

| Strategy | How It Works | Best For |
|---|---|---|
| Round-robin | Probes take turns running each check cycle | Distributing load; getting varied perspectives over time |
| Geographic nearest | The probe closest to the target runs the check | Representative measurement for that target’s primary users |
| All-probe simultaneous | Every probe checks at the same time | Global health heatmap; instantly shows regional vs. universal problems |
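The three strategies differ only in which probes run in a given cycle, and all-probe results make the regional-versus-universal distinction mechanical. A minimal sketch: probe names and coordinates are hypothetical, great-circle (haversine) distance stands in for network proximity (geography is only a proxy for network distance), and the classification rule ("some probes fail" means regional, "all fail" means universal) is a simplifying assumption.

```python
import itertools
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Probe:
    name: str
    lat: float
    lon: float


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometres."""
    r = 6371.0  # mean Earth radius
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp, dl = p2 - p1, math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def round_robin(probes: list[Probe]):
    """Round-robin: each check cycle uses the next probe in turn."""
    return itertools.cycle(probes)


def nearest(probes: list[Probe], target_lat: float, target_lon: float) -> Probe:
    """Geographic nearest: the probe closest to the target runs the check."""
    return min(probes, key=lambda p: haversine_km(p.lat, p.lon, target_lat, target_lon))


def all_probes(probes: list[Probe]) -> list[Probe]:
    """All-probe simultaneous: every probe checks in the same cycle."""
    return list(probes)


def classify(results: dict[str, bool]) -> str:
    """Summarize one all-probe cycle (probe name -> healthy?)."""
    failing = [name for name, ok in results.items() if not ok]
    if not failing:
        return "healthy"
    if len(failing) == len(results):
        return "universal outage"
    return "regional problem: " + ", ".join(sorted(failing))
```

With a single US-East probe, the European failure described above would never appear in `results` at all. With probes on both continents, `classify` reports it as a regional problem.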

Where to place probes

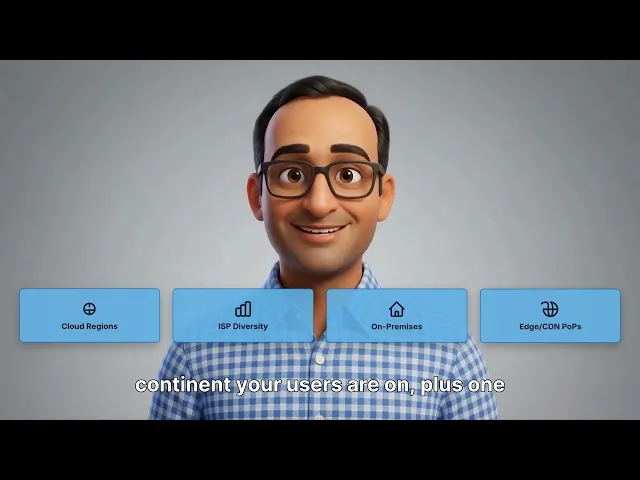

Minimum viable deployment: one probe per continent your users are on, plus one on each major ISP you depend on. Expand based on what you find. Probe placement should follow your user distribution — not your office locations or your cloud regions alone.
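The minimum-viable rule translates directly into a checklist, and the same data shows how much of your user base sits outside today's probe footprint. A sketch assuming you have user share by continent (for example, from product analytics) and a list of ISPs you depend on; the function names and inputs are hypothetical.

```python
def minimum_probe_plan(user_share_by_continent: dict[str, float],
                       critical_isps: list[str]) -> list[str]:
    """One probe per continent that has users, plus one per ISP you depend on."""
    continents = sorted(c for c, share in user_share_by_continent.items() if share > 0)
    return [f"continent:{c}" for c in continents] + [f"isp:{i}" for i in critical_isps]


def placement_gaps(user_share_by_continent: dict[str, float],
                   existing_probe_continents: set[str]) -> dict[str, float]:
    """Continents with users but no probe, mapped to the share of users left unmeasured.

    Placement is driven by where users are, so probes sited only near offices or
    cloud regions show up here as gaps.
    """
    return {c: s for c, s in user_share_by_continent.items()
            if s > 0 and c not in existing_probe_continents}
```

Summing the values returned by `placement_gaps` gives a rough sense of how much of your audience a single-region deployment cannot see.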

Synthetic monitoring is opinionated by design. It asks the network a specific question, on a schedule, from specific locations, and demands a clear answer. That’s not a limitation — it’s the feature. The discipline of deciding what to check, how often, and from where is what separates monitoring that informs from monitoring that creates noise.

Next in the Series

Part 7 — Probe Architecture, P2P Testing, and Path Intelligence. How probes stay resilient during outages, how probe-to-probe testing reveals your internal network’s true health, and how to find bottlenecks hop by hop.

Tags: Synthetic Monitoring, Active Measurement, Check Intervals, Distributed Probes, SLA Monitoring, Network Observability, SRE, Infrastructure Monitoring

About Parlon

Parlon is an infrastructure observability platform built for enterprise teams operating complex, hybrid environments. Parlon combines active synthetic validation, real-time telemetry normalization, and learning-based alerting into a single platform — shifting operations from firefighting to foresight. Learn more at parlon.io.